The roles and meaning of logic

Logic is the calculus of truth.As both the metalanguage of mathematics and an object of study within mathematics, it is important to be able to distinguish the two roles of logic and their respective notations.

When used as a metalanguage for mathematics, logic takes the form of crisp, standardized English. When logical statements are being studied as mathematical objects in their own right, symbolic forms of quantifiers, relations and connectives are appropriate.

Being able to translate between the forms is a critical part of logical literacy:

means ``

means `` and

and  .''

.''

means ``

means `` or

or  .''

.''

means ``it is not the case that

means ``it is not the case that  .''

.''

-

means ``

means `` implies

implies  .''

.''

means ``

means `` if and only if

if and only if  .''

.''

-

means ``for each object

means ``for each object  , it is the case that

, it is the case that  .''

.''

-

means ``there exists an object

means ``there exists an object  such that

such that  .''

.''

Statements

Logic forms complex statements from simpler ones.

In mathematics, equations are the most common simple statements. But, any relation (e.g., less than, greather than or equal, subset-inclusion) can form a mathematical statement.

More generally, any set can form the basis of a relation.

When a set ![]() is used as a relation, the notation

is used as a relation, the notation ![]() is the same as

is the same as ![]() .

.

The following are all simple statements:

- The sum of three and four is seven.

.

.

is less than

is less than  .

.

.

.

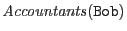

- Bob is an accountant.

-

.

.

Confusing the two roles of logic

In mathematical prose, it is jarring to see the symbolic forms in lieu of their English counterparts.Consider the following two formulations of the same theorem:

Theorem

![]() .

.

Theorem For every integer ![]() greater than 1, there exist two distinct

integers

greater than 1, there exist two distinct

integers ![]() and

and ![]() such that the product of

such that the product of ![]() and

and ![]() is

is ![]() .

.

Which formulation reads more clearly?

[Niggling detail In the first formulation, how do we know that the numbers involved are integers? How do we even know they're numbers?

We don't, unless the ``universe of discourse'' is specified.

The universe of discourse determines what kinds of values variables and expressions may take.

In this case, the universe of discourse is implicitly assumed to be integers. In some texts, it's explicitly specified; in others, it must be inferred from context. To be clear and context-insensitive, one could specify the range of every variable each time, as in this third formulation:

Theorem

![]() .

.

Whether one specifies the universe of discourse, leaves it to be inferred, or specifies the range of each variable must be made in light of the tradeoffs between clarity and precision for the reader.]

Going in the other direction, however, it isn't as awkward (even if it is uncommon). One could use English connectives in place of symbolic connectives--especially when the connectives are bold-faced, e.g., and, or.

The meaning of and, or and not

For the statement ``

There are two ors in logic, and the default or is

inclusive: ``![]() or

or ![]() '' is true when

'' is true when ![]() is true, when

is true, when ![]() is true and

when

is true and

when ![]() and

and ![]() are both true.

In other words, ``

are both true.

In other words, ``![]() or

or ![]() '' is false only when both

'' is false only when both ![]() and

and ![]() are false.

are false.

The other or is exclusive-or (or xor); ``![]() xor

xor

![]() '' is true only when

'' is true only when ![]() is true and

is true and ![]() is false, or when

is false, or when ![]() is false but

is false but

![]() is true.

Exclusive-or is typically written ``either

is true.

Exclusive-or is typically written ``either ![]() or

or ![]() .''

In some texts, the symbol

.''

In some texts, the symbol ![]() denotes exclusive or, but this notation is

not universal.

denotes exclusive or, but this notation is

not universal.

Logical negation (prefixing a statement with the symbol ![]() or ``it is not the case

that'') flips the truth value of a statement from true to false or false to

true.

or ``it is not the case

that'') flips the truth value of a statement from true to false or false to

true.

In most logics, double negation self-eliminates:

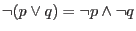

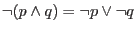

DeMorgan's laws express the relationship between and, or and not:

-

;

;

-

.

.

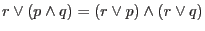

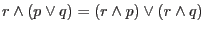

And and or also distribute across each other:

-

;

;

-

.

.

Truth tables for and and or

A truth table enumerates all possible values of a logical expression based on

the value of its operands.

A truth table gives a precise, formal meaning to a logical connective.

For example, the truth table for the or-connective ![]() is:

is:

| false | false | false |

| false | true | true |

| true | false | true |

| true | true | true |

Likewise, the truth table for the and-connective ![]() is:

is:

| false | false | false |

| false | true | false |

| true | false | false |

| true | true | true |

The truth table for the xor-connective ![]() is:

is:

|

|

||

| false | false | false |

| false | true | true |

| true | false | true |

| true | true | false |

The meaning of implication

Many understand implication intuitively, yet find its symbolic formulation puzzling.

The statement ``![]() implies

implies ![]() '' has several equivalent interpretations:

'' has several equivalent interpretations:

- if

, then

, then  ;

;

follows

follows  ;

;

entails

entails  ;

;

is sufficient for

is sufficient for  .

.

Bidirectional implication, or logical equivalence, also has equivalent formulations.

The statement ``![]() if and only if

if and only if ![]() '' is the same as:

'' is the same as:

iff

iff  ;

;

is necessary and sufficient for

is necessary and sufficient for  .

.

Alternate notations for implication

Expressing implications in inference-rule notation is also popular: |

means ``if

|

means ``

The contrapositive

It's easy to prove that

![]() is true if and only if

is true if and only if

![]() is true:

is true:

Truth tables for implication

The truth table for implication

![]() is:

is:

|

|

||

| false | false | true |

| false | true | true |

| true | false | false |

| true | true | true |

The truth table for bidirectional implication ![]() is:

is:

| false | false | true |

| false | true | false |

| true | false | false |

| true | true | true |

Quantifiers

The symbols ![]() and

and ![]() are quantifiers.

The symbol

are quantifiers.

The symbol ![]() is the universal quantifier because it claims

something for every element in the universe; while the symbol

is the universal quantifier because it claims

something for every element in the universe; while the symbol ![]() is the

existential quantifier because it claims the existence of an object that

satisfies a claim.

is the

existential quantifier because it claims the existence of an object that

satisfies a claim.

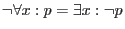

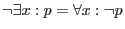

DeMorgan's laws generalize to quantifiers:

-

;

;

-

.

.

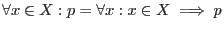

There are subtle notational variations on quantifier forms in symbolic logic:

-

means ``for each

means ``for each  in the set

in the set  , it is the case that

, it is the case that  .''

.''

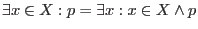

-

means ``there exists an

means ``there exists an  in the set

in the set  such that

such that  .''

.''

-

;

;

-

.

.

In some texts, the colon ![]() is left off quantifiers.

is left off quantifiers.

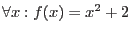

Implicit universal quantification

Consider the following statements:

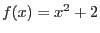

is the function that squares its argument and adds two.

is the function that squares its argument and adds two.

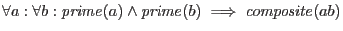

-

.

.

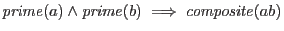

is prime and

is prime and  is prime implies that

is prime implies that  is composite.

is composite.

-

.

.

-

.

.

-

.

.

Rules of inference

In logic, rules of inference allow true statements to be transformed into new true statements. The application of a sequence of rules of inference is what constitutes a formal proof. Being aware of rules of inference, and the most common rules, acts as nice supplement to logical literacy.

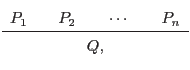

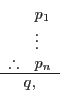

Rules of inference may be expressed in the following form:

|

or as:

|

and such a rule means, ``if

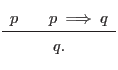

The most frequently applied rule of inference is modus ponens:

|

In short, modus ponens says that if we know

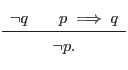

Modus tollens, for instance, works in reverse:

|

If we know that

Wikipedia provides a good summary of the rules of inference.

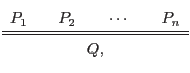

Sound rules of inference

For a rule of inference:

|

to be sound,

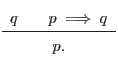

Logical fallacies

A logical fallacy is a would-be rule of inference which is not always true. A common fallacy is affirming the consequent; that is, assuming:

|

It's a good exercise to prove that this rule is a fallacy by showing it does not always reduce to true.

What's next?

With respect to logic's role as a metalanguage in mathematical prose, this post covers the essential vocabulary for technical reading.

I glossed over the nitty gritty that should be common sense, like the idempotent laws (e.g., p or p is just p), the commutative laws and the identity laws. Any textbook on logic will cover these.

For most, the next step is to learn how to read and write proofs.

If you're interested in getting deeper into symbolic logic, the first rabbit hole starts with relations and formulae, continues through entailment, satisfication, derivation, validity, consistency, soundness and completeness, and ends with models, theories and incompleteness.

More resources

- My recommended reading for grad students.

-

Harry Gensler's

Introduction to Logic

is the best introduction to logic and proofs that I know of. This is probably because it was written for philosophers instead of mathematicians.

-

Mathematical Logic

, on the other hand, is a punishing read for non-mathematicians. That said, it is a complete, unified development of all of the foundational concepts in symbolic logic (including Gödel's incompleteness theorems). As a reference for the working formal mathematician, it is an indispensible resource.

-

Gödel's Theorem: An Incomplete Guide to Its Use and Abuse

is the best lay reader's overview of Gödel's incompleteness theorems that I know of.