My server

I've been a satisfied linode customer for about seven years.

I've always had the cheapest plan (about $200/year), and because it's a virtual server, it automatically upgrades for free whenever the cheapest plan gets more memory, disk space or bandwidth.

Right now, that means 512 MB RAM, 24 GB disk and 200 GB/month bandwidth.

Step -1: Detect the surge

I keep tabs on my site through an app for Google Analytics on the iPhone.

Unfortunately, Google Analytics failed to detect the surge: page load time was so high that visitors were closing the page before analytics could load.

As a result, my analytics showed almost no increase in page views.

It took me about fifteen hours to realize my site was crushed.

I now have a cron job running to watch for stress on might.net.

It looks at the httpd log file every five minutes, and it sends me email when requests per second exceed a preset threshold. If my site does get another surge, it won't take me fifteen hours and tens of thousands of disappointed visitors to realize what's happening.
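A minimal sketch of what such a check could look like, not the actual cron job. It assumes Apache's common/combined log format, whose timestamps look like `[10/Oct/2000:13:55:36 -0700]`. The function names, the log path, and the threshold are all hypothetical.

```python
import re
from datetime import datetime, timedelta, timezone

# Timestamp field of Apache's common/combined log format,
# e.g. [10/Oct/2000:13:55:36 -0700]
TIMESTAMP = re.compile(r"\[(\d{2}/\w{3}/\d{4}:\d{2}:\d{2}:\d{2} [+-]\d{4})\]")


def requests_per_second(lines, now, window_seconds=300):
    """Average request rate over the window that ends at `now`."""
    cutoff = now - timedelta(seconds=window_seconds)
    hits = 0
    for line in lines:
        match = TIMESTAMP.search(line)
        if match is None:
            continue  # skip lines that are not in the assumed format
        stamp = datetime.strptime(match.group(1), "%d/%b/%Y:%H:%M:%S %z")
        if cutoff < stamp <= now:
            hits += 1
    return hits / window_seconds


def check(log_path, threshold):
    """Print an alert when the recent request rate exceeds `threshold`."""
    with open(log_path, errors="replace") as log:
        rate = requests_per_second(log, datetime.now(timezone.utc))
    if rate > threshold:
        print(f"Traffic surge: {rate:.1f} requests/second")
    return rate
```

A script run by cron every five minutes could call something like `check("/var/log/apache2/access.log", 5.0)`, where both values are placeholders. Cron typically mails a job's output to its owner, so printing the alert is often enough to trigger the email. This sketch rereads the whole log on every run, which is simple but wasteful for large files.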

I also installed the linode iPhone app, so I can check server load in real time.

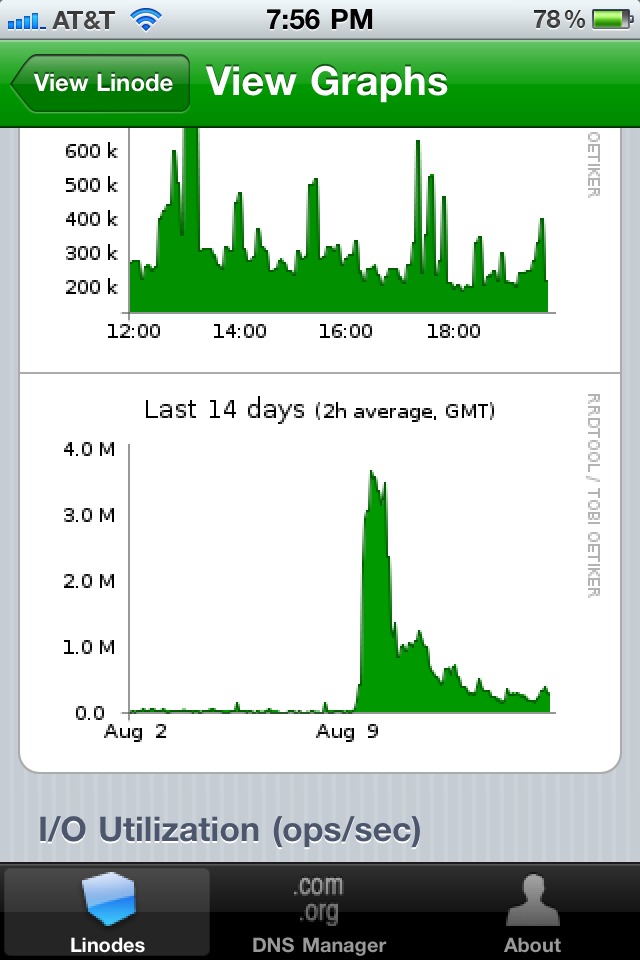

The bandwidth graph could have told me I had a traffic surge:

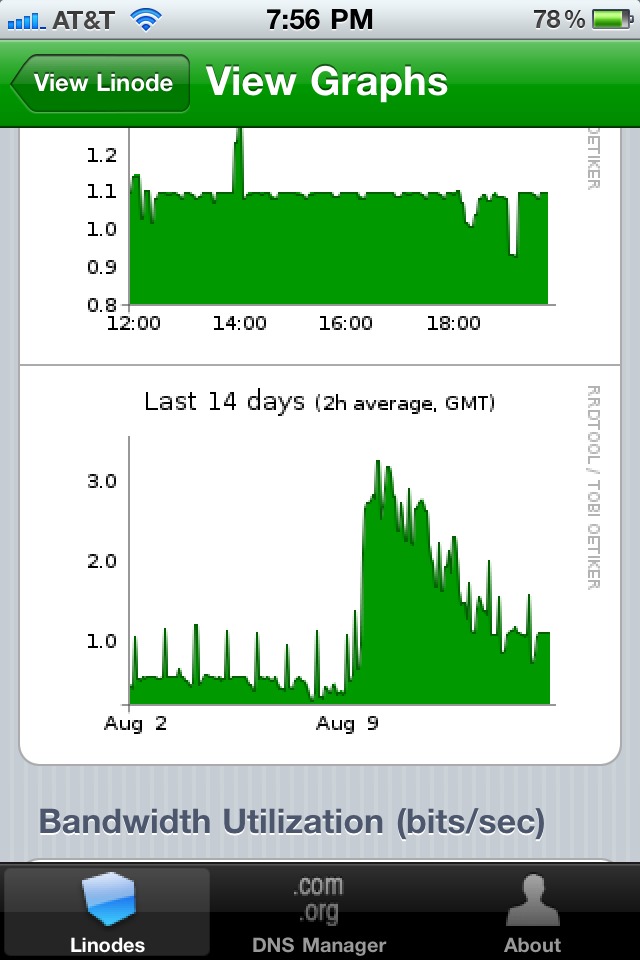

The CPU graph could have told me that I had an artificial bottleneck with threads:

CPU utilization never exceeded 3%!

Step 0: Find the bottleneck

To figure out what was happening, I emptied my browser cache and reloaded the page with firebug running.

The Net panel showed that it took 24 seconds to load the initial page. After that, the remaining files loaded quickly.

I should have realized right away that this behavior meant the server was hamstrung by a thread limit. It took me ten minutes to figure that out.

Step 1: Cut image quality

Since the new post was my first image-heavy post, I realized I could cut bandwidth consumption in half by compressing images.

ImageMagick's convert tool can shrink images at the command line:

$ convert image.ext -quality 20 image-mini.ext

I wrote a shell script to compress every image in my images directory.

Then, I did a search-and-replace in emacs to switch everything to the -mini images.
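The two steps above were done with a shell script and emacs. The same idea can be sketched in Python, as an illustration rather than the original script. It assumes ImageMagick's `convert` is on the PATH, that the images are JPEG or PNG, and that the HTML references them as `src="..."` with double quotes. The function names and the quality value of 20 are hypothetical choices.

```python
import re
import subprocess
from pathlib import Path

IMAGE_SUFFIXES = {".jpg", ".jpeg", ".png"}  # assumed image types

# src="..." attributes that end in one of the assumed image extensions
IMG_SRC = re.compile(r'(src="[^"]*?)(\.(?:jpe?g|png))"', re.IGNORECASE)


def mini_name(path):
    """photo.jpg -> photo-mini.jpg, in the same directory."""
    path = Path(path)
    return path.with_name(f"{path.stem}-mini{path.suffix}")


def compress_all(directory, quality=20):
    """Write a -mini copy of every image in `directory` using convert."""
    for image in sorted(Path(directory).iterdir()):
        if image.suffix.lower() not in IMAGE_SUFFIXES:
            continue
        if image.stem.endswith("-mini"):
            continue  # already a compressed copy
        subprocess.run(
            ["convert", str(image), "-quality", str(quality),
             str(mini_name(image))],
            check=True,
        )


def use_mini_images(html):
    """Point every matching image reference at its -mini version."""
    def swap(match):
        stem, ext = match.group(1), match.group(2)
        if stem.endswith("-mini"):
            return match.group(0)  # leave rewritten references alone
        return f'{stem}-mini{ext}"'
    return IMG_SRC.sub(swap, html)
```

Keeping the originals and writing `-mini` copies means the change is easy to undo once the surge passes.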

Page load time dropped from 24 to 12 seconds.

Quick and dirty, yet effective.

Step 2: Make content static

I use server-side scripts to generate what are actually static pages.

Under heavy load, dynamic content chews up time.

So, I scraped the generated HTML out of View Source and dropped it in a static index.html file.

Page load times dropped to 6 seconds. Almost bearable!
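Copying from View Source works for one page. For more than a handful, the snapshot could be scripted. The following is a small sketch under that assumption, using only the standard library. The function name, URL, and destination path are placeholders.

```python
from urllib.request import urlopen


def snapshot(url, destination):
    """Fetch the rendered page once and save the bytes as a static file."""
    with urlopen(url, timeout=30) as response:
        body = response.read()
    with open(destination, "wb") as out:
        out.write(body)
    return len(body)  # number of bytes written
```

For example, `snapshot("http://example.com/post/", "index.html")` would save whatever the server-side script currently generates, so the web server can hand out the file without running the script again.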

Step 3: Adding threads in the apache conf

In firebug, pages were still bursting in after the initial page load.

On a hunch, I checked my linode control panel. I saw that the CPU utilization graph was near 3%, and that there was plenty of bandwidth left.

Suddenly, I remembered that the default apache configuration sets a low number of processes and threads.

Requests were streaming in and getting queued, waiting for a free thread.

Meanwhile, the CPU was spending 97% of its time doing nothing.

I opened my apache configuration file, found the mpm_worker_module section, and ramped up processes and threads:

    <IfModule mpm_worker_module>
    # StartServers was 2
    StartServers 4
    # MaxClients was 150
    MaxClients 600
    # MinSpareThreads was 25
    MinSpareThreads 50
    # MaxSpareThreads was 75
    MaxSpareThreads 150
    ThreadsPerChild 25
    MaxRequestsPerChild 0
    </IfModule>

Page load times fell to two seconds.

My linode, friend for seven years, hadn't failed me after all.

(Update: 600 is too high for a box with 512 MB RAM when the load gets even more serious, as I discovered a few months after I wrote this post.)
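A rough sketch of the arithmetic behind that update. With the worker MPM, each child process runs ThreadsPerChild threads, so MaxClients implies a number of processes. The 30 MB per process used here is a made-up placeholder, not a measurement, and the function name is hypothetical. Measure a real httpd process before trusting any result.

```python
def worker_capacity(max_clients, threads_per_child, mb_per_process, ram_mb):
    """Estimate how many worker processes a MaxClients setting implies."""
    # Each child process runs threads_per_child threads (ceiling division).
    processes = -(-max_clients // threads_per_child)
    estimated_mb = processes * mb_per_process
    return {
        "processes": processes,
        "estimated_mb": estimated_mb,
        "fits_in_ram": estimated_mb <= ram_mb,
    }


# mb_per_process=30 is an assumed figure for illustration only.
before = worker_capacity(150, 25, mb_per_process=30, ram_mb=512)
after = worker_capacity(600, 25, mb_per_process=30, ram_mb=512)
```

Under that assumption, the old setting needs 6 processes and the new one 24, which would not fit in 512 MB once every thread is busy. Apache's documentation also gives 16 as the worker MPM's default ServerLimit, so a configuration that needs 24 processes would likely need ServerLimit raised as well.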

What if there's a next time?

In case my blog ever gets a traffic surge again, I've prepared in advance.

I signed up for amazon web services. With amazon's EC2 service, I'll be able to deploy as many temporary mirrors as I need in just a few minutes.

What's convenient about the EC2 service is that you don't pay a penny until you actually need it. Having the account set up is free insurance.

I've also set up an event-based httpd to serve my static content.

Serving files is a high-IO, low-CPU activity. An event-based server handles many connections without dedicating a thread to each one, so it isn't artificially crippled by having too few threads.

Years ago, lighttpd worked well for me as a static content server, so I'm using it again.

According to a brief survey on twitter, nginx is a great static content server too.
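To illustrate the event-based model (this is not how lighttpd or nginx are implemented), here is a toy sketch using Python's asyncio. A single thread answers many simultaneous connections because each handler yields while it waits on the network. It serves one in-memory page rather than files from disk, and all names are hypothetical.

```python
import asyncio


async def handle(reader, writer, body=b"<html>static page</html>"):
    """Answer any request with the same in-memory page."""
    await reader.readuntil(b"\r\n\r\n")  # read the request headers
    head = (
        "HTTP/1.1 200 OK\r\n"
        "Content-Type: text/html\r\n"
        f"Content-Length: {len(body)}\r\n"
        "Connection: close\r\n\r\n"
    ).encode("ascii")
    writer.write(head + body)
    await writer.drain()
    writer.close()
    await writer.wait_closed()


async def fetch(host, port):
    """Tiny test client: send one GET and read until the server closes."""
    reader, writer = await asyncio.open_connection(host, port)
    writer.write(b"GET / HTTP/1.1\r\nHost: test\r\n\r\n")
    await writer.drain()
    response = await reader.read()
    writer.close()
    await writer.wait_closed()
    return response


async def demo(clients=50):
    """Serve `clients` concurrent requests from one event loop."""
    server = await asyncio.start_server(handle, "127.0.0.1", 0)
    port = server.sockets[0].getsockname()[1]  # port chosen by the OS
    async with server:
        return await asyncio.gather(
            *(fetch("127.0.0.1", port) for _ in range(clients))
        )
```

Running `asyncio.run(demo(50))` opens fifty connections at once without any thread pool, so there is no thread limit for requests to queue behind.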

More resources

- For scaling in the long run, we referenced Building Scalable Web Sites a lot in my start-up. I recommend it.

- I also recommend High Performance Web Sites. It covers how to make pages load fast and save bandwidth.

- If you're using any of the popular JavaScript libraries, let Google host them for you.

[1] Thanks go to my colleague Suresh Venkatasubramanian for introducing me to such an apt term.